Author: Kimberly Snoyl ● kimberlysnoyl@gmail.com

Last October, I had the opportunity to attend the first AutomationSTAR conference in Munich, Germany, organized by the EuroSTAR team. I was invited to speak on Automatic Usability Testing, since I had won the RisingSTAR award last June at EuroSTAR.

It was a two-day conference, where 25 global experts in testing shared their knowledge. There was a German track and an English track, but since my German is not very good, I only attended the English presentations. In this blog post I would like to share my highlights of the conference with you.

Workshop on Soft Skills of Automation

The first day of the conference started with a workshop by Jenny Bramble called ‘Soft skills of Automation’. The first part of the workshop addressed the question ‘What is automation?’.

Soft skills are how you interact with other people. The soft skills of automation are the ways you interact with features outside of writing code.

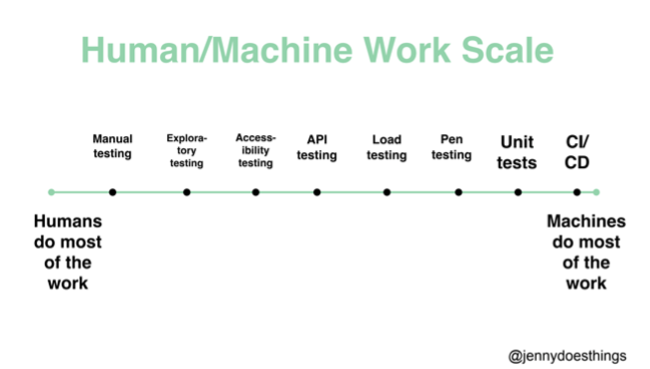

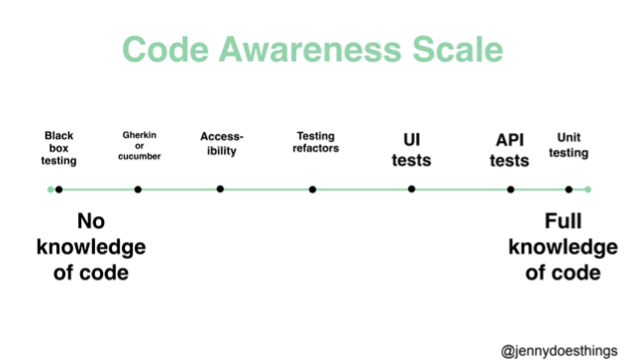

In the workshop we had to place ourselves and/or different kinds of tests on different scales, for example the Human/Machine scale and the code awareness scale. The manual testing mindset can help a lot with automation, because you can’t test something you don’t understand. Manual testing is about trying to understand the product, but as a tester it is also important to know how to read and understand the code: ‘The more comfortable we are with the code, the more prepared we are to test the code’. So: learn to code, or at least learn to read code, so that you understand how it works and how the product will probably behave. Learn to work with Git and the software development life cycle. Dive into logs and analytics and, most of all, review unit tests!

The second part of the workshop was about whether a test CAN be automated versus whether it SHOULD be automated. I learned that we should not automate everything that CAN be automated. Think about whether it actually SHOULD be automated and whether it produces valuable output. Do not hoard tests, but clean up your test cases once in a while. Think about the return on investment. Automation lets you be a bit lazier, but beware: all automated tests also need to be maintained.

Software testing wave

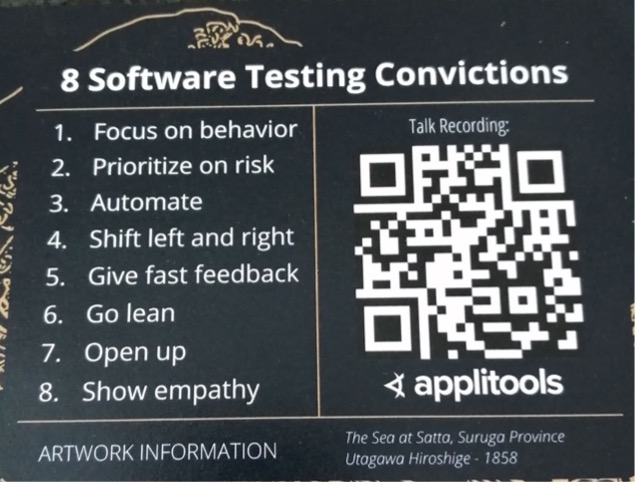

by Andrew (Pandy) Knight: Automationpanda.com/BDD | TAU: Test automation university (free courses!)

There is a recording of this talk, so I will not go into too much detail. The talk was about Andrew’s personal software testing convictions: what he finds important when testing, and what he considers the best way to go about testing and software development. The most enlightening part for me was that I finally understood what people mean when they say ‘shift right’. They never mean ONLY test in production, but rather shift left AND right: test as early as possible, but ALSO keep monitoring after release and learn. That makes sense!

Do we really need a test automation engineer?

This talk by Bas Dijkstra was one of my favorite presentations of AutomationSTAR 2022. I had not met Bas or heard him speak before, but I would highly recommend his talks. Not only were there a lot of jokes about the movie Office Space during the presentation, it was also a great story about his experience and what he has learned over the past years.

Project after project, he realized that writing automation after a product has already been built just doesn’t work. There needs to be communication about what and how to automate. There needs to be a strategy.

What I also found enlightening was his point that automating the GUI, which is where most people, including myself, start when they get into automation, is actually the hardest part! (Talk about flaky tests!)

He referred to blogs written by Blake Norris: Don’t automate test cases and stop automating your regression tests, but start automating during the development sprint. Of course, this is not always possible when you get into a legacy project.

He also talked about the term ‘Manual Testers’ being strange, since those testers are not testing manuals…

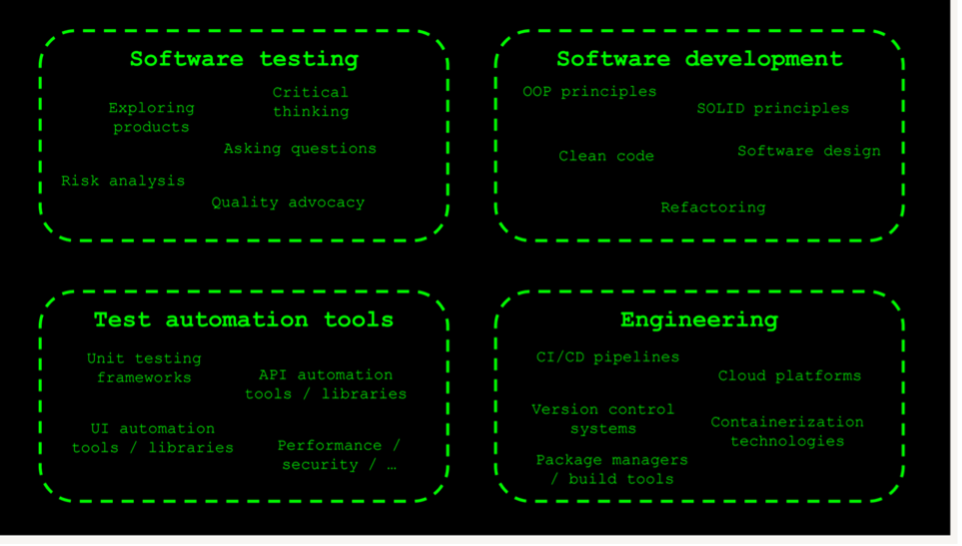

He talked about how test automation engineers are actually four roles rolled into one.

These people become mediocre at their job, while they would have the room to become excellent if they focused on one role. With the SDET role (Software Development Engineer in Test), you get developers who are not interested in testing, and you reinforce silos instead of collaboration. Test automation is not a role, it is a joint effort:

- Start with pair programming (synchronous), instead of code reviews (asynchronous).

- Let there be a Quality engineer on the team, who is responsible for quality in all its aspects.

Automating Biases

I personally liked seeing this ‘soft skills’ talk by Dermot Caniffe at a test automation conference. As a UX researcher I already know quite a lot about biases, but it is good to refresh that knowledge.

This talk was about cognitive biases: subconscious errors that make us misinterpret information and that affect the rationality and accuracy of our decisions and judgements. They are shortcuts our brain takes. These biases become part of our test cases and test automation, since we automate requirements as they are subjectively perceived by the automator. We are automating our beliefs, rather than the objective requirements.

Examples:

- Confirmation bias: we find what aligns with our beliefs.

Solution: debug the thought processes that go into the tests: talk to someone about your test cases, bounce ideas and get feedback. Score the complexity of the component against the test effort (coverage).

- Framing effect: options that are framed positively are more desirable than options that are framed negatively. E.g., if you think that bugs are bad, fewer bugs will be found. If you think bugs are good, there is more chance of finding false positives.

Solution: use neutral terminology, incentivize defect identification.

- Availability heuristic: things that come to mind easily are considered more important (recency effect). The last time you encountered a problem is more available in your brain.

Solution: use more than one source of information/more people (BDD).

- Survivorship bias: focusing only on ‘fit’ input, e.g., user-reported bugs.

Solution: automate knotty workflows (complexity). Assume you need more information, more data, from more users and other stakeholders.

- The curse of knowledge: assuming everyone has the same knowledge you have, and therefore not testing obvious things.

Solution: create temporary amnesia, interview actual newcomers, get as many voices involved in requirements as possible.

So how can we prevent automating our biases?

- It’s good to talk: communication is key. Collaboration is even better.

- Reframe failure: normalize failure. Make sure you create an environment where it is safe to fail (psychological safety at work).

- Normalize backtracking on ‘bad thinking’, that is, on falling for biases.

Principles of Testing

The closing keynote of the conference was by Jenny Bramble, who had also opened the conference with her workshop. This keynote was on the Principles of Testing, much like Andrew Knight’s software testing convictions.

Jenny’s general code of ethics is simple: ‘Be nice’. The way we communicate as testers influences the culture on the team. Phrases like ‘yay!! I found a bug’ or ‘I am here to break things’ are attacking phrases, even when you say them playfully. Be collaborative and have positive interactions with your team.

The second message was: spend time thinking about principles that push you forward (introspection). Knowing yourself and why you do things can help you focus. In this talk we were encouraged to write down our own principles.

Since I am not a tester anymore, I try to keep my principles about my work in general rather than about a specific job title:

- Do what gives me energy at a certain moment: sometimes that is prototyping, sometimes that is writing a blog. Sometimes that is being inspired by others or inspiring others by going to and speaking at conferences!

- Improve people’s experiences/life: I want people to be able to use the products I design as efficiently as possible, so people spend less time on applications and more time on the things that really matter in life.

- Continuous learning, continuous improvement: I love the sprint retrospective, especially in my current team where we all are eager to improve the process and our collaboration. Nobody’s perfect, and we take the time to review our past and try to be better in the future.

- Be curious: ask questions, get to know others, and share knowledge.

- Innovate and contribute: I have lots of ideas and I would love to contribute to the field (e.g. the idea that won the RisingSTAR award).

All in all, the first edition of AutomationSTAR was a great success. I was inspired and had a chance to meet some wonderful people. This was my second time at a EuroSTAR conference and I feel I am becoming part of the family ❤️. Next up is EuroSTAR in Antwerp, June 2023!
